Research into animal vocalizations has boomed over the past few decades. Advances in recording equipment and analysis techniques have led to new insights into animal behavior, population distribution, taxonomy, and anatomy.

In a new study published in Ecology and Evolution, we show the limitations of one of the most common methods used to analyze animal vocalizations. These limitations may have contributed to disagreements about a whale song in the Indian Ocean and about the calls of land animals.

We present a new method that can overcome this problem. It lays the groundwork for future advances in animal vocal research, revealing previously hidden details of animal calls.

The meaning of the whale song

More than a quarter of whale species are listed as vulnerable, endangered or threatened. Understanding whale behavior, population distribution, and the effects of human-made noise is key to successful conservation efforts.

Creatures that spend most of their time hidden in the open ocean are difficult to study, but analyzing their songs can give us important information.

However, we cannot simply analyze whale songs by listening to them – we need ways to measure them in more detail than the human ear can provide.

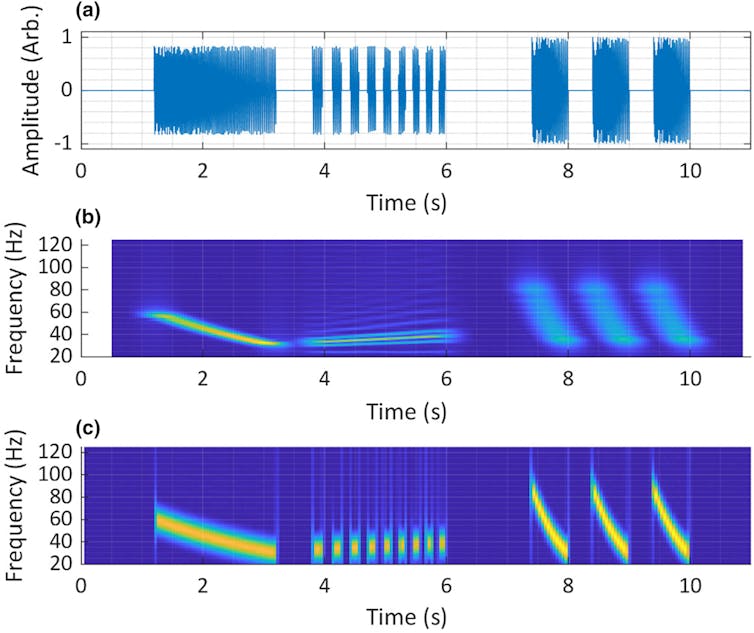

For this reason, the first step in studying animal vocalizations is to create a visualization called a spectrogram. This can give us a better idea of the character of the sound. Specifically, it shows when the energy in the sound occurs (temporal detail) and at what frequency (spectral detail).

By carefully examining these spectrograms and measuring them with other algorithms, we can learn the structure of the sound in terms of time, frequency, and intensity. They are also a key tool in reporting results in the publication of our work.
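As a rough illustration of what a spectrogram contains, the sketch below builds one from a synthetic signal using only NumPy. It is not the software from the study: the function name `stft_spectrogram`, the 1,000 Hz sample rate, and the 40 Hz and 80 Hz test tones are all assumptions chosen for the example.

```python
import numpy as np

def stft_spectrogram(signal, sample_rate, window_len, hop):
    """Basic Hann-windowed spectrogram: returns (times, freqs, power)."""
    window = np.hanning(window_len)
    n_frames = 1 + (len(signal) - window_len) // hop
    # Cut the signal into overlapping, windowed frames.
    frames = np.stack([signal[i * hop : i * hop + window_len] * window
                       for i in range(n_frames)])
    # Energy at each frequency within each frame.
    power = np.abs(np.fft.rfft(frames, axis=1)) ** 2
    freqs = np.fft.rfftfreq(window_len, d=1.0 / sample_rate)
    times = (np.arange(n_frames) * hop + window_len / 2) / sample_rate
    return times, freqs, power.T  # rows = frequency, columns = time

# Hypothetical test signal: 40 Hz for the first second, 80 Hz for the second.
sample_rate = 1000
t = np.arange(2 * sample_rate) / sample_rate
signal = np.where(t < 1.0,
                  np.sin(2 * np.pi * 40 * t),
                  np.sin(2 * np.pi * 80 * t))

times, freqs, power = stft_spectrogram(signal, sample_rate,
                                       window_len=200, hop=50)

# Strongest frequency in each time slice: the "when" and "at what frequency".
peak_freqs = freqs[np.argmax(power, axis=0)]
print(peak_freqs[0], peak_freqs[-1])
```

Each column of `power` is one moment in time and each row is one frequency, which is the grid a spectrogram image displays as color or brightness.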

Why are spectrograms limited?

The most common method of generating spectrograms is the short-time Fourier transform (STFT). It is used in many fields, including mechanical engineering, biomedical engineering, and experimental physics.

However, the STFT has a well-known limitation: it cannot accurately visualize all the temporal and spectral details of a sound at the same time. This means that every STFT spectrogram sacrifices some temporal or spectral information.

This problem is more noticeable at lower frequencies. This makes it especially difficult to analyze the sounds of animals like the blue whale, whose song is so low-pitched that it approaches the lower limit of human hearing.
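The arithmetic behind this trade-off can be sketched in a few lines. The assumptions are that an STFT window lasting T seconds blurs timing over roughly T seconds and spaces its frequency bins 1/T Hz apart, and that 20 Hz and 2,000 Hz stand in for a very low and a much higher call. The function name `stft_tradeoff` is hypothetical.

```python
def stft_tradeoff(window_seconds, tone_hz):
    """Summarise what one STFT window length means for a tone of a given pitch."""
    return {
        "time_blur_s": window_seconds,               # events closer than this smear together
        "freq_spacing_hz": 1.0 / window_seconds,     # distance between frequency bins
        "cycles_in_window": tone_hz * window_seconds,  # how much of the tone the window sees
    }

for window_seconds in (0.05, 0.5, 5.0):
    for tone_hz in (20.0, 2000.0):
        result = stft_tradeoff(window_seconds, tone_hz)
        print(f"window {window_seconds:>4} s, tone {tone_hz:>6} Hz: "
              f"bins {result['freq_spacing_hz']:.1f} Hz apart, "
              f"{result['cycles_in_window']:.0f} cycles in window")
```

A 0.05-second window holds 100 cycles of a 2,000 Hz tone but only a single cycle of a 20 Hz tone, so a low call needs a much longer window to pin down its pitch, and that longer window blurs its timing.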

Prior to my PhD, I worked in the field of acoustics and audio signal processing, where I became very familiar with the STFT spectrogram and its drawbacks.

However, there are different methods of generating spectrograms. I thought that STFTs used in whale song studies might obscure some details and that there might be other methods better suited to the task.

Yankovic and Rogers, 2024

In our study, my co-author Tracy Rogers and I compared STFT to new visualization techniques. We used artificial (synthetic) test signals as well as recordings of blue whales, Asian elephants, and other animals such as cassowaries and American crocodiles.

The methods we tested included a new algorithm called the Superlet transform, which we adapted from its original use in brain wave analysis. We found that this method produced visualizations of our synthetic test signal with up to 28% fewer errors than others we tested.
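For readers curious about the idea, here is a heavily simplified sketch. It is not the implementation used in the study. It assumes the general description of the superlet: for each frequency, measure the signal with several Morlet wavelets of increasing length, then combine the results with a geometric mean. The function names, the width convention `n_cycles / (5 * freq)`, and the default settings are assumptions for illustration only.

```python
import numpy as np

def morlet_response(signal, sample_rate, freq, n_cycles):
    """Magnitude of the signal filtered by one complex Morlet wavelet."""
    sd = n_cycles / (5.0 * freq)                 # Gaussian width in seconds (assumed convention)
    half = int(np.ceil(3 * sd * sample_rate))    # truncate the wavelet at 3 standard deviations
    t = np.arange(-half, half + 1) / sample_rate
    wavelet = np.exp(-t**2 / (2 * sd**2)) * np.exp(2j * np.pi * freq * t)
    wavelet /= np.sum(np.abs(wavelet))           # comparable gain for every wavelet length
    return np.abs(np.convolve(signal, wavelet, mode="same"))

def superlet_scalogram(signal, sample_rate, freqs, base_cycles=3, order=5):
    """Geometric mean of Morlet responses with base_cycles * 1, 2, ..., order cycles."""
    rows = []
    for freq in freqs:
        responses = np.array([
            morlet_response(signal, sample_rate, freq, base_cycles * k)
            for k in range(1, order + 1)
        ])
        # Geometric mean, computed through logs; the small offset avoids log(0).
        rows.append(np.exp(np.mean(np.log(responses + 1e-12), axis=0)))
    return np.array(rows)                        # rows = frequency, columns = time

# Hypothetical test: a steady 40 Hz tone sampled at 1,000 Hz.
sample_rate = 1000
t = np.arange(2 * sample_rate) / sample_rate
tone = np.sin(2 * np.pi * 40 * t)
freqs = np.arange(20, 81, 5)

scalogram = superlet_scalogram(tone, sample_rate, freqs)
strongest = freqs[np.argmax(scalogram[:, len(tone) // 2])]
print(strongest)
```

Short wavelets locate events precisely in time and long wavelets locate them precisely in frequency. The geometric mean keeps only the energy that all of them agree on.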

A good way to visualize animal sounds

This result was promising, but Superlet revealed its full potential when we applied it to animal sounds.

Recently, there has been some controversy surrounding the song of the Chagos pygmy blue whale: whether its first sound is “pulsed” or “tonal.” Both terms describe a sound that contains additional frequencies, but those frequencies are produced in two different ways.

STFT spectrograms cannot resolve this dispute, as they can represent this sound as either pulsed or tonal, depending on how they are configured. Our Superlet visualization shows the sound as pulsed, which is consistent with most studies describing the song.
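The effect of configuration can be reproduced with a synthetic signal. The sketch below uses a 100 Hz tone whose loudness rises and falls 10 times per second. These values are hypothetical and are not taken from any whale recording. It analyzes the same signal with a short and a long STFT window.

```python
import numpy as np

def frame_powers(signal, window_len, hop):
    """Power spectrum of each Hann-windowed frame: shape (n_frames, n_freqs)."""
    window = np.hanning(window_len)
    n_frames = 1 + (len(signal) - window_len) // hop
    frames = np.stack([signal[i * hop : i * hop + window_len] * window
                       for i in range(n_frames)])
    return np.abs(np.fft.rfft(frames, axis=1)) ** 2

def loudness_variation(power):
    """How much total energy changes from frame to frame (std / mean)."""
    energy = power.sum(axis=1)
    return energy.std() / energy.mean()

sample_rate = 1000
t = np.arange(4 * sample_rate) / sample_rate
envelope = 0.5 * (1 + np.cos(2 * np.pi * 10 * t))   # 10 pulses per second
signal = envelope * np.sin(2 * np.pi * 100 * t)     # 100 Hz carrier

short_win = frame_powers(signal, window_len=50, hop=10)     # 0.05 s windows
long_win = frame_powers(signal, window_len=1000, hop=130)   # 1 s windows

short_variation = loudness_variation(short_win)
long_variation = loudness_variation(long_win)

# With 1 s windows the bins are 1 Hz apart, so bin 100 is 100 Hz and bin 110 is 110 Hz.
mean_spectrum = long_win.mean(axis=0)
sideband_ratio = mean_spectrum[110] / mean_spectrum[100]

print(short_variation, long_variation, sideband_ratio)
```

With the short window, the energy swings strongly from frame to frame and the sound looks pulsed. With the long window, the energy is almost steady and the pulsing appears instead as separate, steady frequency lines beside 100 Hz, so the same sound looks tonal.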

When we visualized the roar of an Asian elephant, Superlet showed pulsing that was mentioned in the original description of the sound but was absent from all subsequent descriptions. It had also never been shown in a spectrogram.

Our Superlet visualization of both the southern cassowary call and the American crocodile croak revealed previously unreported temporal details that were not represented in spectrograms from previous studies.

These are preliminary findings, each based on only a single recording. More sounds will need to be analyzed to confirm these observations. Still, they are a promising starting point for future work.

Ease of use may be Superlet’s greatest strength, even beyond improved accuracy. Many researchers who use sound to study animals have backgrounds in ecology, biology, or veterinary science. They learn audio signal analysis only as a means to an end.

To make the Superlet transform more accessible to these researchers, we have included it in a free, easy-to-use, open-source software application. We look forward to seeing what new discoveries they can make using this exciting new approach.
